LLM Connector acts as a local OpenAI-compatible proxy backed by AWS Bedrock. You can connect dev tools that support local LLM models, such as Xcode 26 or Android Studio.

- No Python/CLI setup — native app experience

- Visual configuration — port, region, auth method

- Live request logging — built-in console log viewer

- macOS-native credential handling — AWS CLI profile integration or API key
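Because the proxy is OpenAI-compatible, any HTTP client should be able to talk to it, not only Xcode or Android Studio. The sketch below builds a chat completion request with the Python standard library. The port (`8080`), the `/v1` path prefix, the model ID, and the placeholder bearer token are all assumptions for illustration; use the port shown in the app's configuration and a model your Bedrock account can access.

```python
import json
import urllib.request

# Assumption: the proxy listens on port 8080 and serves the usual
# OpenAI-style path under /v1. Adjust to match the app's settings.
BASE_URL = "http://localhost:8080/v1"


def build_chat_request(prompt, model="example-model-id",
                       base_url=BASE_URL, api_key="unused"):
    """Build an OpenAI-style chat completion request (nothing is sent yet).

    `model` and `api_key` are hypothetical placeholders; whether the
    proxy checks the Authorization header at all is an assumption.
    """
    payload = {
        "model": model,
        "messages": [{"role": "user", "content": prompt}],
    }
    return urllib.request.Request(
        url=f"{base_url}/chat/completions",
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Content-Type": "application/json",
            "Authorization": f"Bearer {api_key}",
        },
        method="POST",
    )


def send_chat(prompt, **kwargs):
    """Send the request and return the first reply in the response."""
    request = build_chat_request(prompt, **kwargs)
    with urllib.request.urlopen(request, timeout=60) as response:
        body = json.load(response)
    # Standard OpenAI chat completion response shape.
    return body["choices"][0]["message"]["content"]
```

With the app running, calling `send_chat("Say hello in one sentence.")` should return the model's reply, and the request should show up in the built-in console log viewer.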

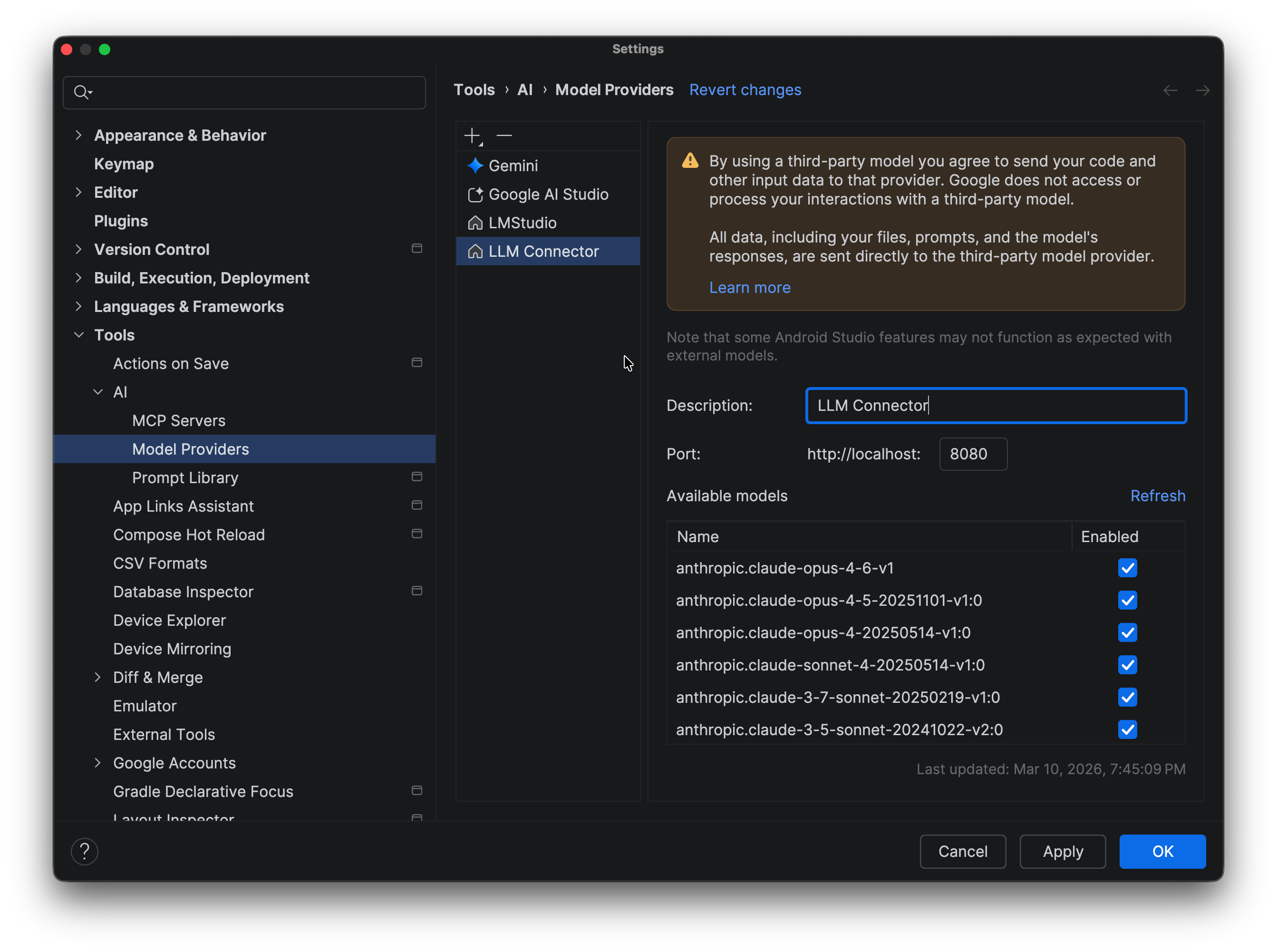


AWS Bedrock account

LLM Connector supports two authentication methods: AWS Profile or API Key.

Profile - The AWS CLI must be configured on your local machine. Run the following command to check that your local setup works:

`aws sts get-caller-identity`

API Key - Create an API key in the AWS console.
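The profile check can also be scripted, which is handy when you use several named profiles. This is a minimal sketch that shells out to the AWS CLI and parses its JSON output; the helper names `check_profile` and `parse_caller_identity` are made up for this example and are not part of LLM Connector.

```python
import json
import subprocess


def parse_caller_identity(output):
    """Extract the identity fields from `aws sts get-caller-identity` JSON."""
    data = json.loads(output)
    return {key: data[key] for key in ("Account", "Arn", "UserId")}


def check_profile(profile=None):
    """Return the caller identity for a profile, or None if the check fails.

    `profile` is the name of an AWS CLI profile; None uses the default one.
    """
    cmd = ["aws", "sts", "get-caller-identity", "--output", "json"]
    if profile:
        cmd += ["--profile", profile]
    try:
        result = subprocess.run(cmd, capture_output=True, text=True, timeout=30)
    except (FileNotFoundError, subprocess.TimeoutExpired):
        # The AWS CLI is not installed, or the call hung.
        return None
    if result.returncode != 0:
        # Typically missing or expired credentials.
        return None
    return parse_caller_identity(result.stdout)
```

If `check_profile()` returns `None`, fix the local AWS CLI setup before selecting the Profile option in the app.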

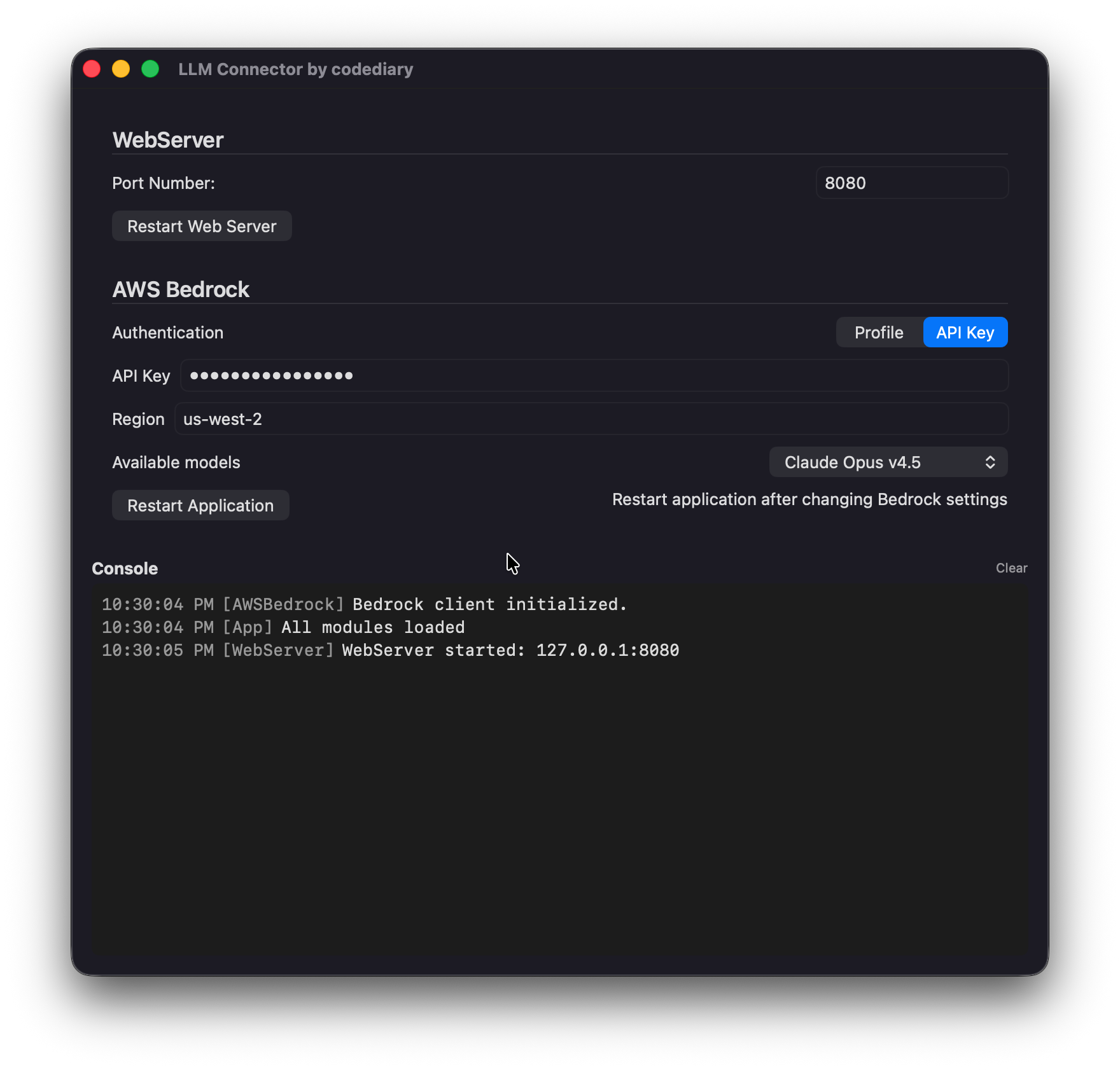

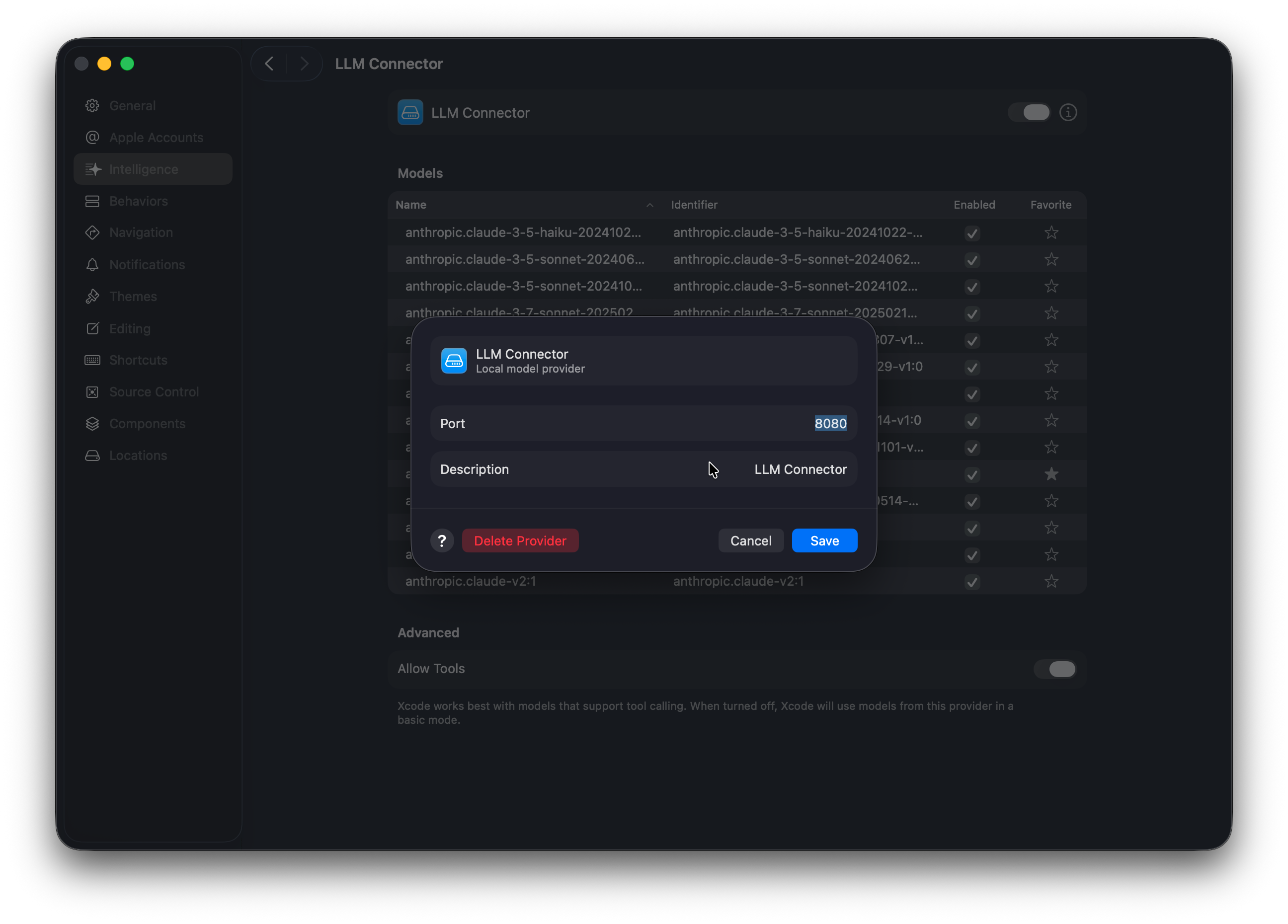

Xcode

You can add LLM Connector as a Local Provider in the Xcode Intelligence settings.


Now you can chat in the Xcode editor.

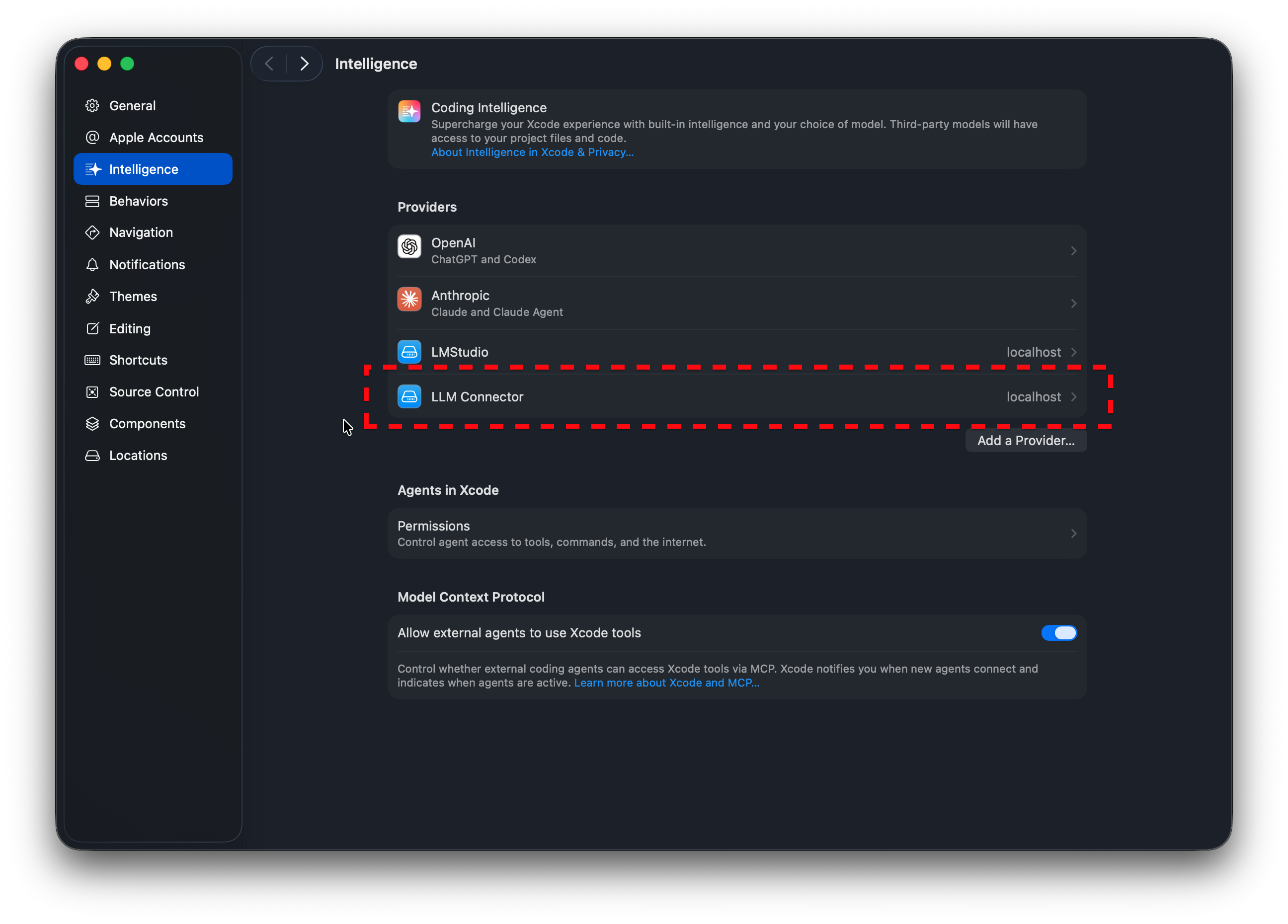

Android Studio

You can add LLM Connector as a Local Provider in Android Studio.
